German Youth Association of People with Hearing Loss

SIGNS

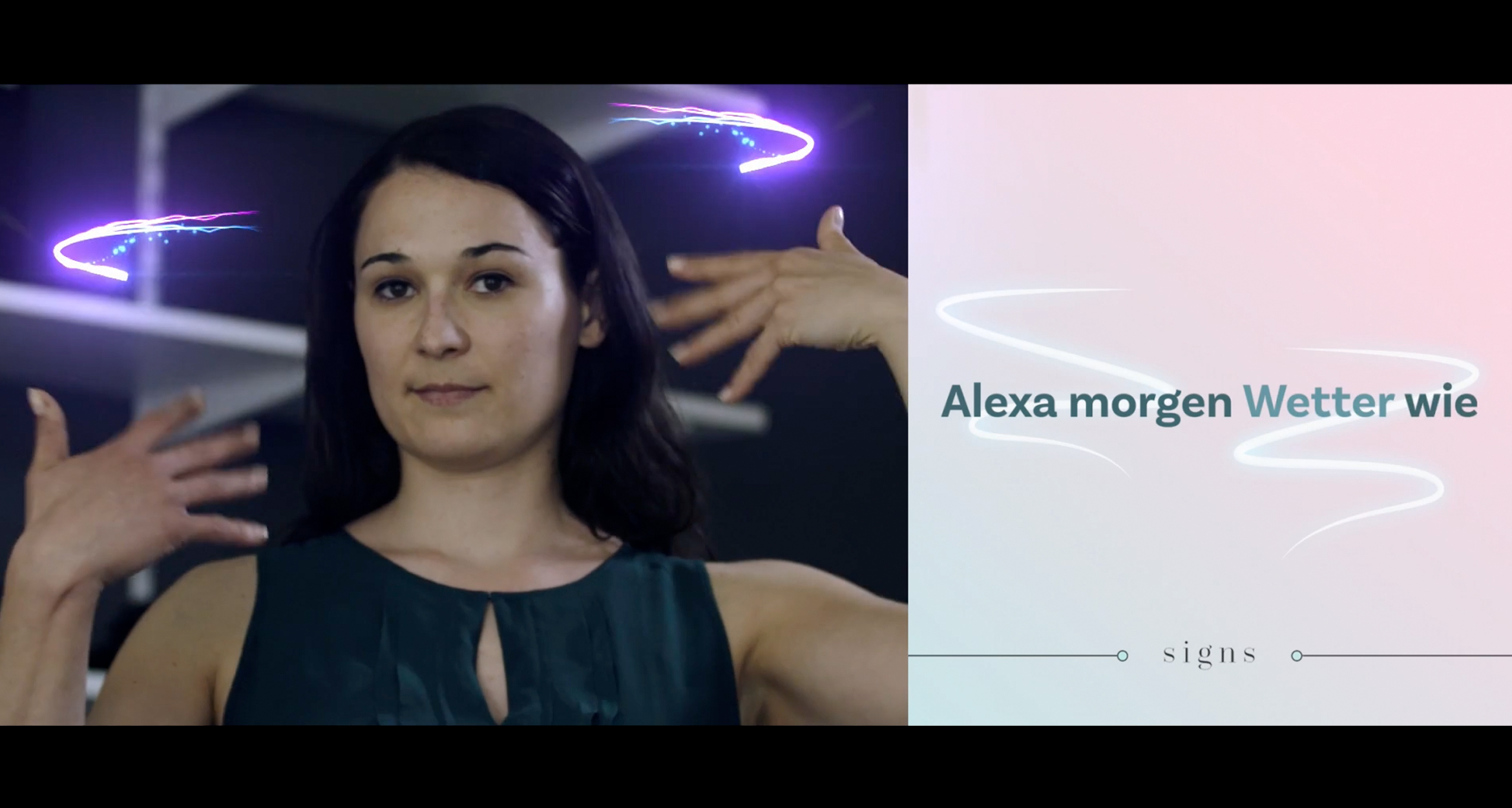

The first sign language tool for voice assistants

Voice technology excludes people without a voice. But that same technology has the potential to ensure equal inclusion of all humans.

That’s why we developed SIGNS, the world’s first smart voice assistant for people with hearing loss.

RECOGNITION

D&AD Impact — Graphite

Fast Company's World Changing Ideas — Honorable Mentions x3

The Webby Awards — Winner x2

The kind of excitement when SIGNS was actually understanding their sign language and was answering. This was definitely one moment I will never forget.

— Listen to more about SIGNS from Mark


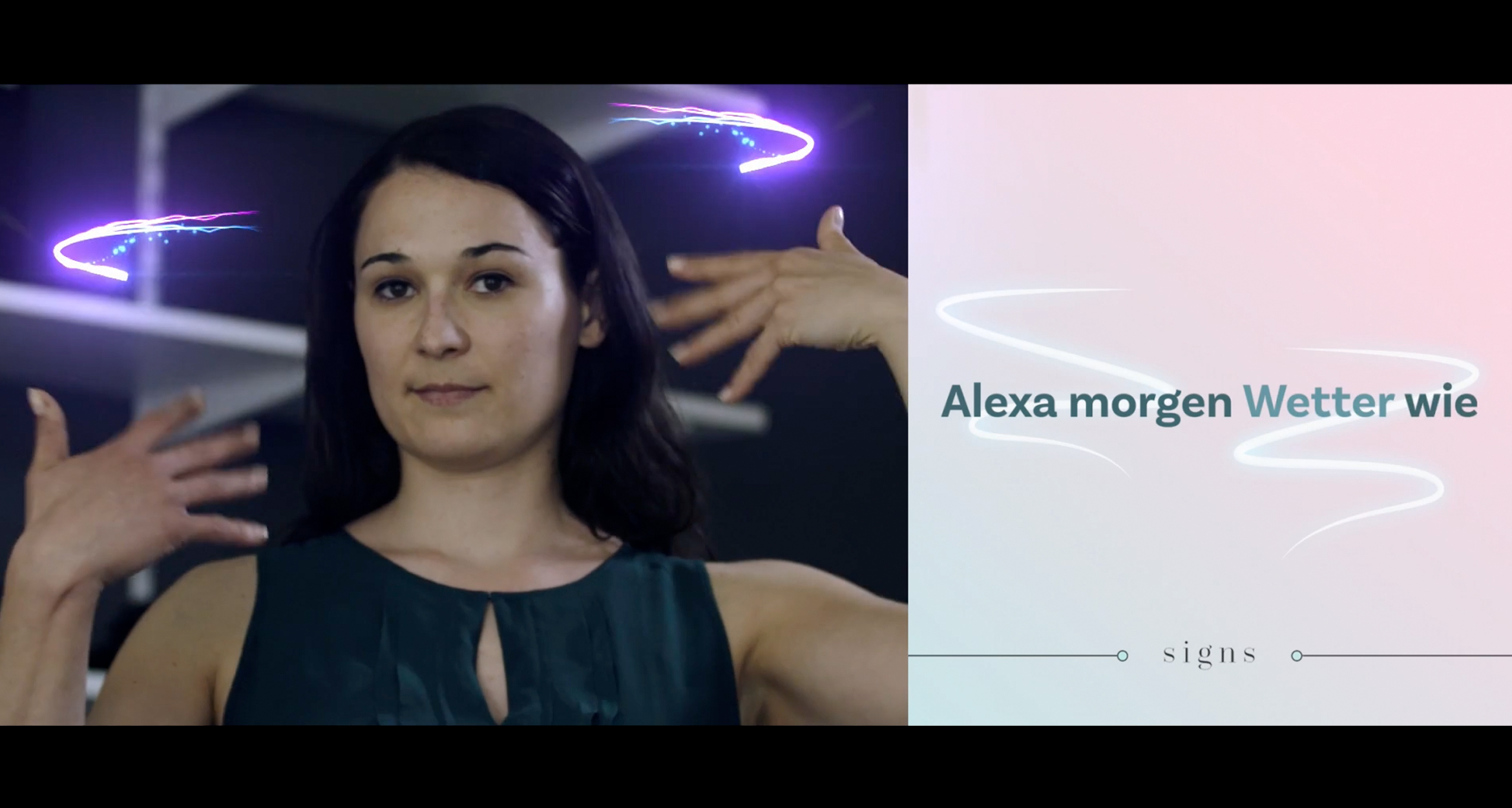

We worked right from the beginning with the German Association for People with Hearing Loss. We built several prototypes at the early stage to prove that the technology is ready and the UX is actually working. At first, we invited a few of them to train the prototype system with sign language. And after a couple of weeks, we invited one of them again to try out the experience. And yeah, this kind of excitement of this person with hearing loss when SIGNS was actually understanding their sign language and was answering, probably for the first time. So this was definitely one moment I will never forget.

There are over 2 billion voice-enabled devices across the globe. According to Gartner, 30% of all digital interactions are moving toward non-screen-based actions. Voice assistants are changing the way we shop, search, communicate or even live.

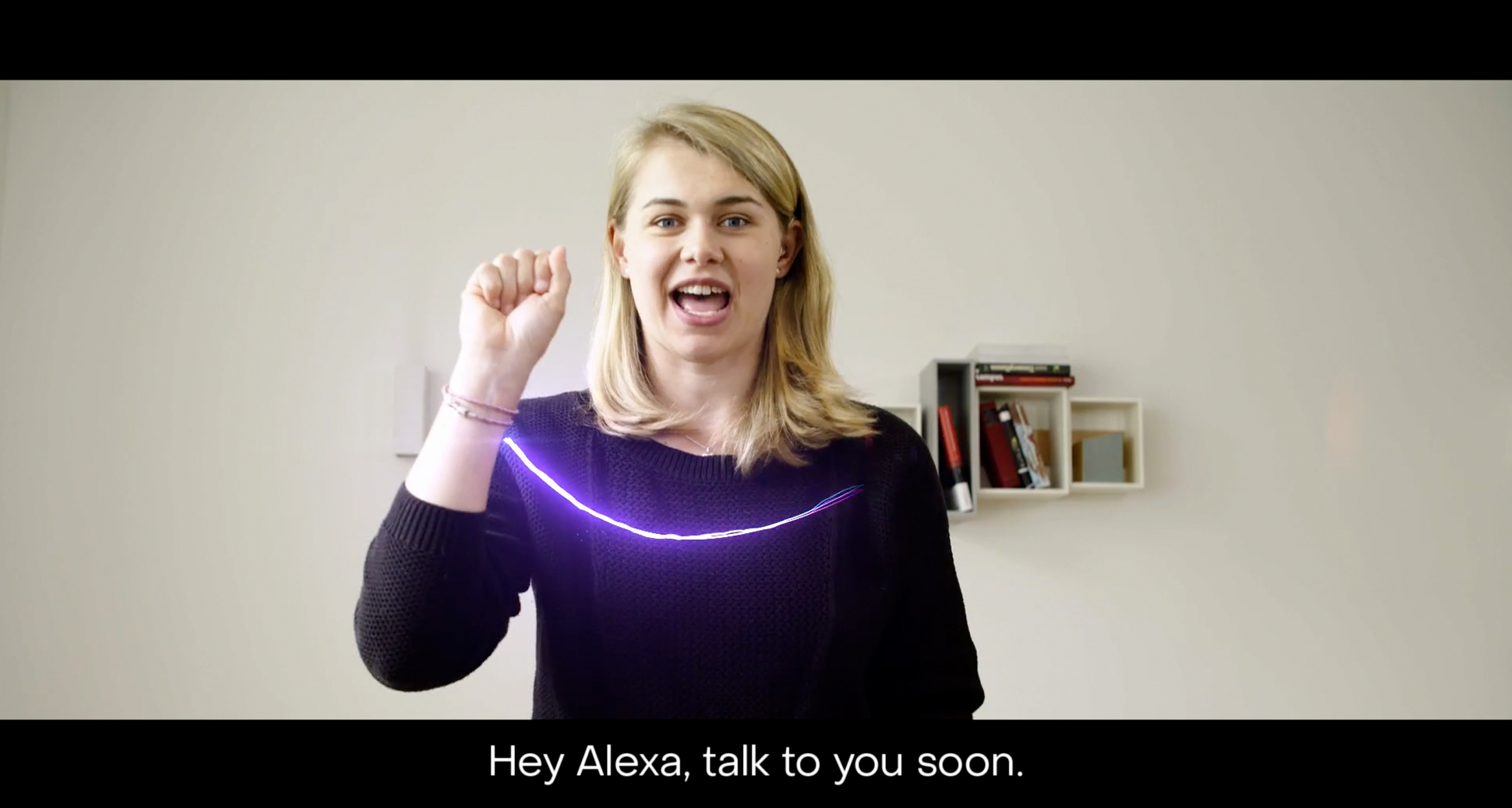

Voice technology is having life-changing implications for millions, so we asked ourselves a few questions:

What about those without a voice? What about those who cannot hear? And considering that around 466 million people worldwide have disabling hearing loss, how can we leverage the positive side of tech as a means to make this world more inclusive and participatory?

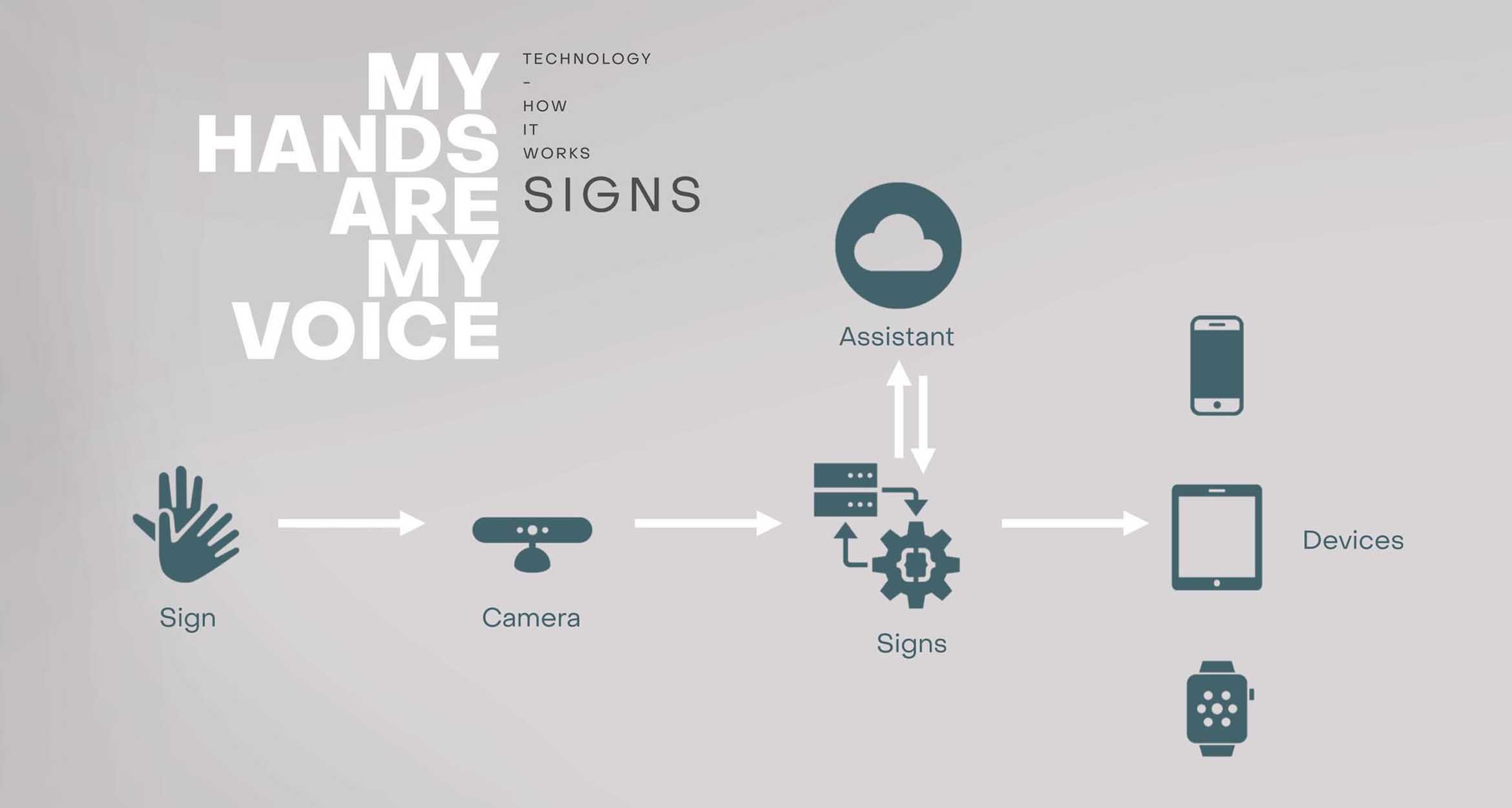

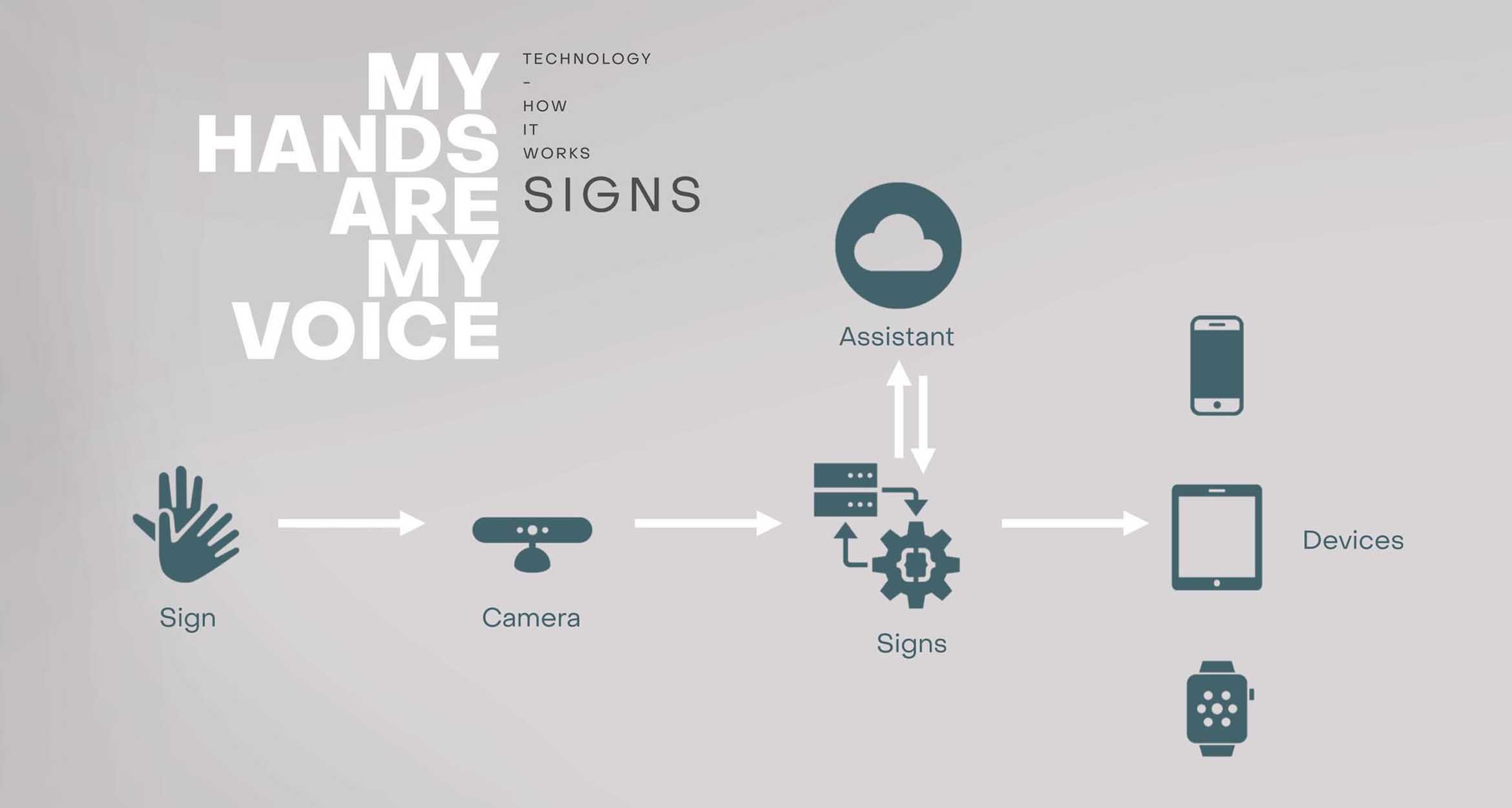

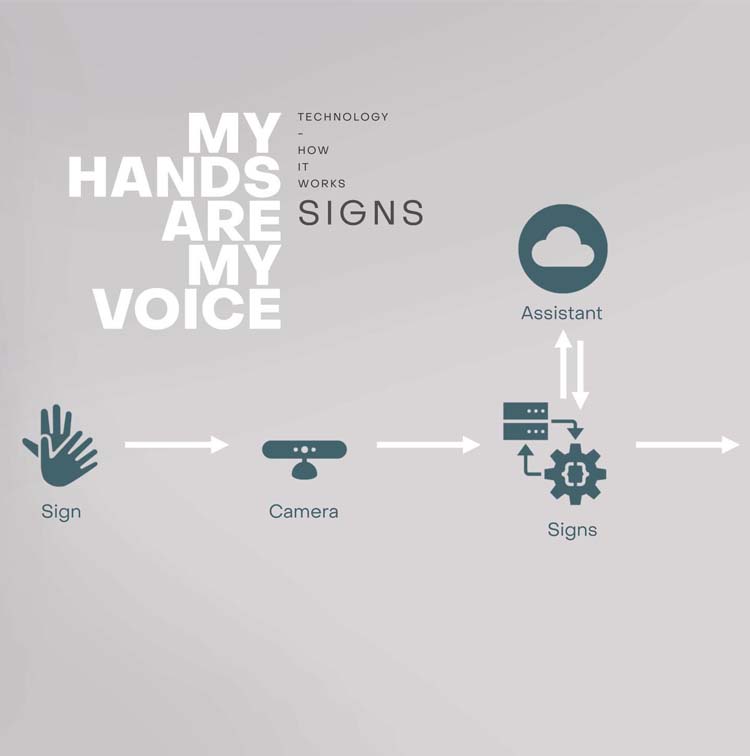

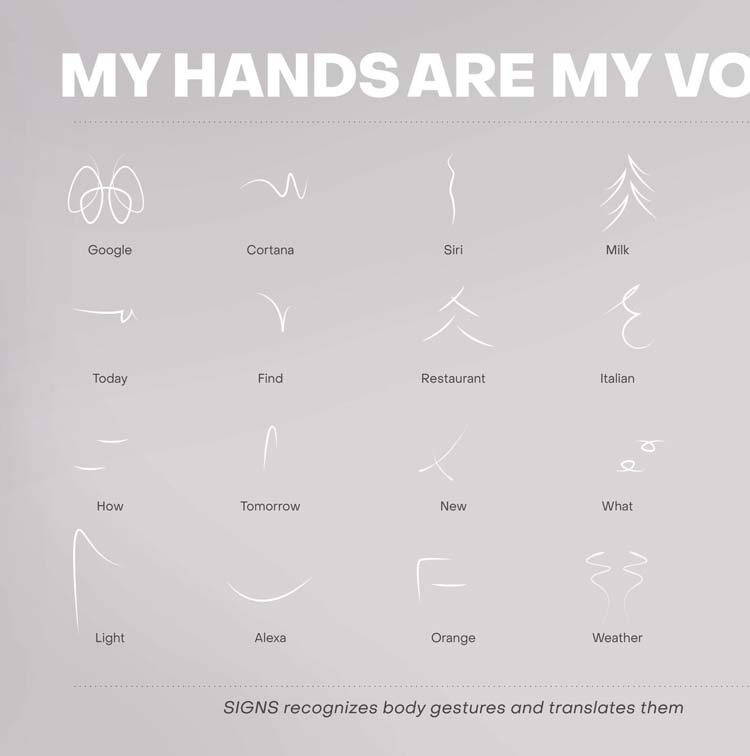

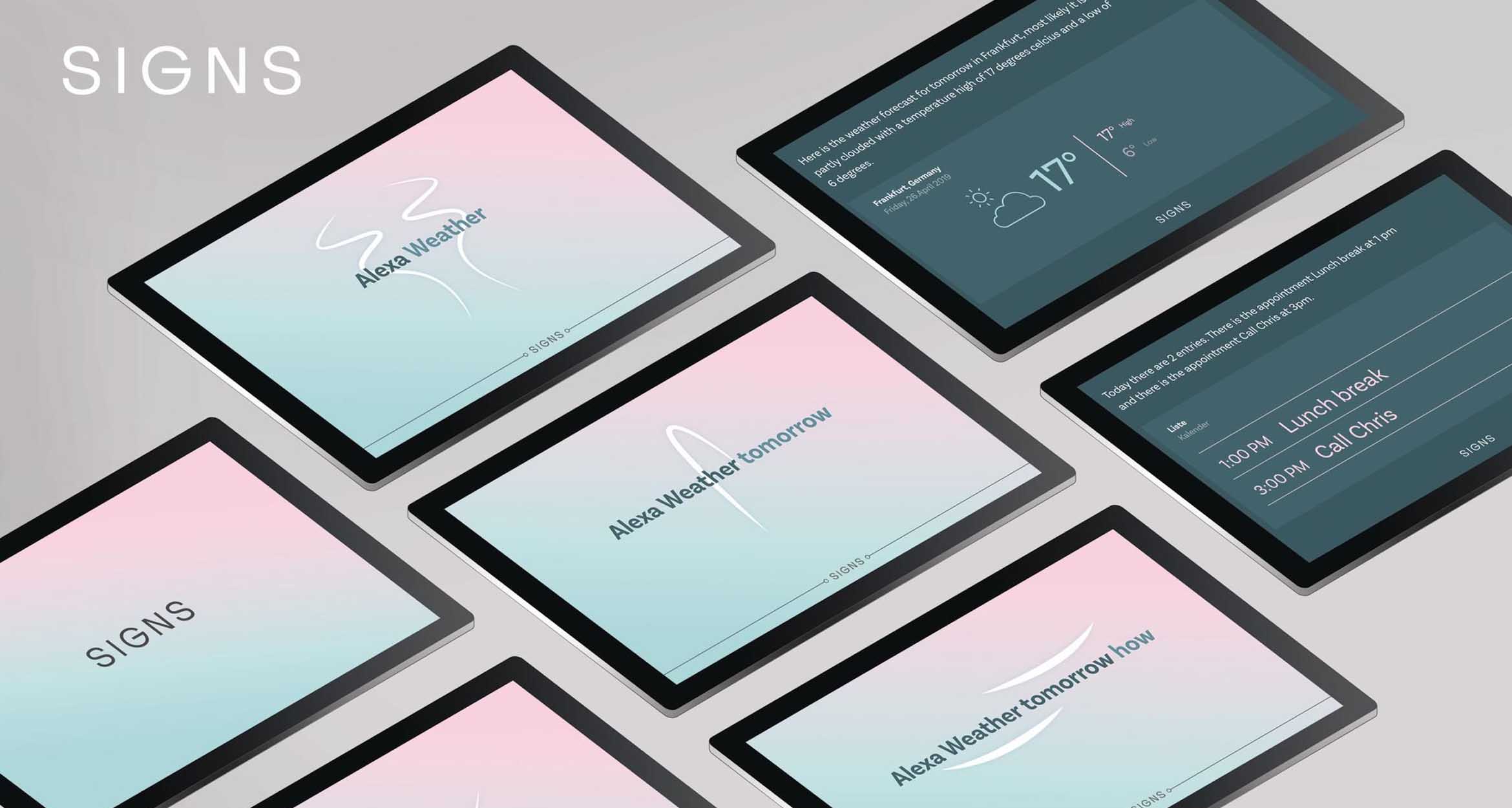

We may not have all the answers, but that didn’t stop us from developing SIGNS, an innovative smart tool that recognizes and translates sign language in real time and then communicates directly with a selected voice assistant service.

This solution lets people use the full functionality of a voice assistant through hand movements, bridging an important accessibility gap between deaf people and voice technology.

SIGNS is based on a machine learning framework and uses AI to decode the complexity of sign language. The integrated camera recognizes facial muscles, hands and movements as 4D data. And it works on any browser-based operating system with an integrated camera.
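To make the idea of turning camera data into an assistant request more concrete, here is a minimal sketch in Python. It is not the SIGNS implementation, which is not described here beyond being based on a machine learning framework. It swaps in a simple nearest-template match where the real system uses a trained model, and every name in it (`TEMPLATES`, `classify_frame`, `frames_to_signs`, `build_query`), along with the tiny four-number "landmark" vectors, is a hypothetical stand-in chosen for illustration.

```python
from math import dist

# Hypothetical reference vectors, one per sign. A real system would use
# many more landmark coordinates and a trained model, not fixed templates.
TEMPLATES = {
    "weather": (0.1, 0.9, 0.5, 0.2),
    "tomorrow": (0.8, 0.1, 0.3, 0.7),
    "lights": (0.5, 0.5, 0.9, 0.9),
}


def classify_frame(landmarks, templates=TEMPLATES, max_distance=0.3):
    """Return the label of the closest template, or None if nothing is close."""
    label, best = min(
        ((name, dist(landmarks, vec)) for name, vec in templates.items()),
        key=lambda pair: pair[1],
    )
    return label if best <= max_distance else None


def frames_to_signs(frames, min_run=2):
    """Keep a sign only once it has been seen in min_run consecutive frames."""
    signs = []
    current, run = None, 0
    for frame in frames:
        label = classify_frame(frame)
        if label == current:
            run += 1
        else:
            current, run = label, 1
        # Emit each sign exactly once, at the moment its run is long enough.
        if label is not None and run == min_run:
            signs.append(label)
    return signs


def build_query(signs):
    """Join recognized signs into the text request sent to an assistant."""
    return " ".join(signs)


frames = [
    (0.1, 0.9, 0.5, 0.2),
    (0.12, 0.88, 0.5, 0.2),
    (0.8, 0.1, 0.3, 0.7),
    (0.8, 0.12, 0.3, 0.7),
    (0.0, 0.0, 0.0, 0.0),  # matches no template and is ignored
]
query = build_query(frames_to_signs(frames))
print(query)
```

Requiring a sign to persist across several frames is one simple way to avoid reacting to a single noisy frame. The resulting text would then be passed to the selected voice assistant service, a step left out here because no specific service or interface is named.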

How’s the weather tomorrow? Change lights to blue. Find an Italian restaurant. Just speak with your hands, and SIGNS will answer.

The importance of digital accessibility is on the rise.

What started as a social project for the German Youth Association of People with Hearing Loss has now become a globally sought-after tech innovation.

SIGNS raised awareness of inclusion in the digital age and facilitated access to new technologies.

Never before has there been a sign language assistant of this quality: one with the prospect of becoming a worldwide platform that is easily accessible everywhere and keeps learning new signs and sign languages.

The goal is to make SIGNS available on all assistants and to all hearing-impaired people. And like voice assistants themselves, SIGNS is under continuous development to keep improving the accuracy and reliability of the system.